
The Best Platform For Active Investors

"I always print your posts and study it one by one."

Trusted by +

investors

What’s Inside

Turn Noise into Action

Investing starts with a lot of confusing information.

The Fullstack Investor helps you spot the patterns that reveal the structure.

Noise becomes structure, and structure leads to action.

Thematic Portfolios

Space: The Space Economy

Founder-Led Companies: Bet on the Visionaries

Quantum Computers: The Future of Computing

Nuclear: The Future of Energy

Rare Earth Elements: The Metals Behind Everything

Drones: The Future of Warfare

Air Taxis: The Future of Air Mobility

Coming Soon

AI Agents: The Future of Work

Humanoid Robots: The Future of Labor

AI Infrastructure: Powering the Future

Autonomous Vehicles: The Future of Mobility

Portfolio Tracker

| Action | Ticker | Price | Date | Return | Setup |
| --- | --- | --- | --- | --- | --- |
| Buy | ORCL | $176.38 | | 41.06% | Breakout |

Real-Time Market Updates

Lin

Market Update: The Secret AI Model

AI is one of the biggest opportunities of this decade, and likely the defining one. It is reshaping every industry, changing how work gets done, how decisions are made, and how value is created. This is the leading sector in the market right now, and that is not by coincidence. The demand for compute keeps rising and there is no sign of it slowing.

AI systems require enormous computational power. Training models, running real-time inference, operating AI agents, generating images and video, controlling robots, and enabling autonomous vehicles all depend on massive data center capacity, advanced chips, high-bandwidth networking, and reliable energy infrastructure. The more capable these systems become, the more compute they consume. Most people still underestimate this.

And this is still early. AI is not just a tool. It is a new form of intelligence that can plan, reason, and solve complex problems. As these systems move from research environments into everyday products, enterprise workflows, and real physical applications, compute requirements will expand dramatically from here.

There is practically no limit to how much intelligence we can use.

And now Anthropic just did something you almost never see in tech. They built a new secret AI model called Claude Mythos, and it’s so good at cybersecurity that they think it could actually be dangerous to release. Claude Mythos blew past existing benchmarks and showed a huge jump in autonomous hacking ability.

So, instead of launching it like a normal product, they’re holding it back and sharing it with selected partners. The idea is to strengthen global systems first, before tools like this fall into the wrong hands.

It clearly shows that we’re not even close to the limit of what these models can do. They are capable of much, much more, and that means we’ll need more AI compute.

That’s why this continues to be the most important theme. And companies across chips, cloud, hardware manufacturing, networking, and power systems are seeing incredible growth. Recent earnings have confirmed how strong demand really is. Many suppliers are reporting record orders, multi-year backlog commitments, and faster deployment cycles. Even second-order players such as design tools, memory, cooling, and optical components are benefiting as the ecosystem scales.

That is why the AI infrastructure theme continues to lead. If you have not yet explored the AI infrastructure, semiconductor, and photonics portfolios, now is the time to take a look.

The companies that did pull back are already recovering quickly, and many of the strongest names barely moved at all while other growth stocks were selling off.

So, here are a few of the key names and subsectors to keep an eye on right now. Many of them should already be familiar, but I wanted to highlight them anyway.

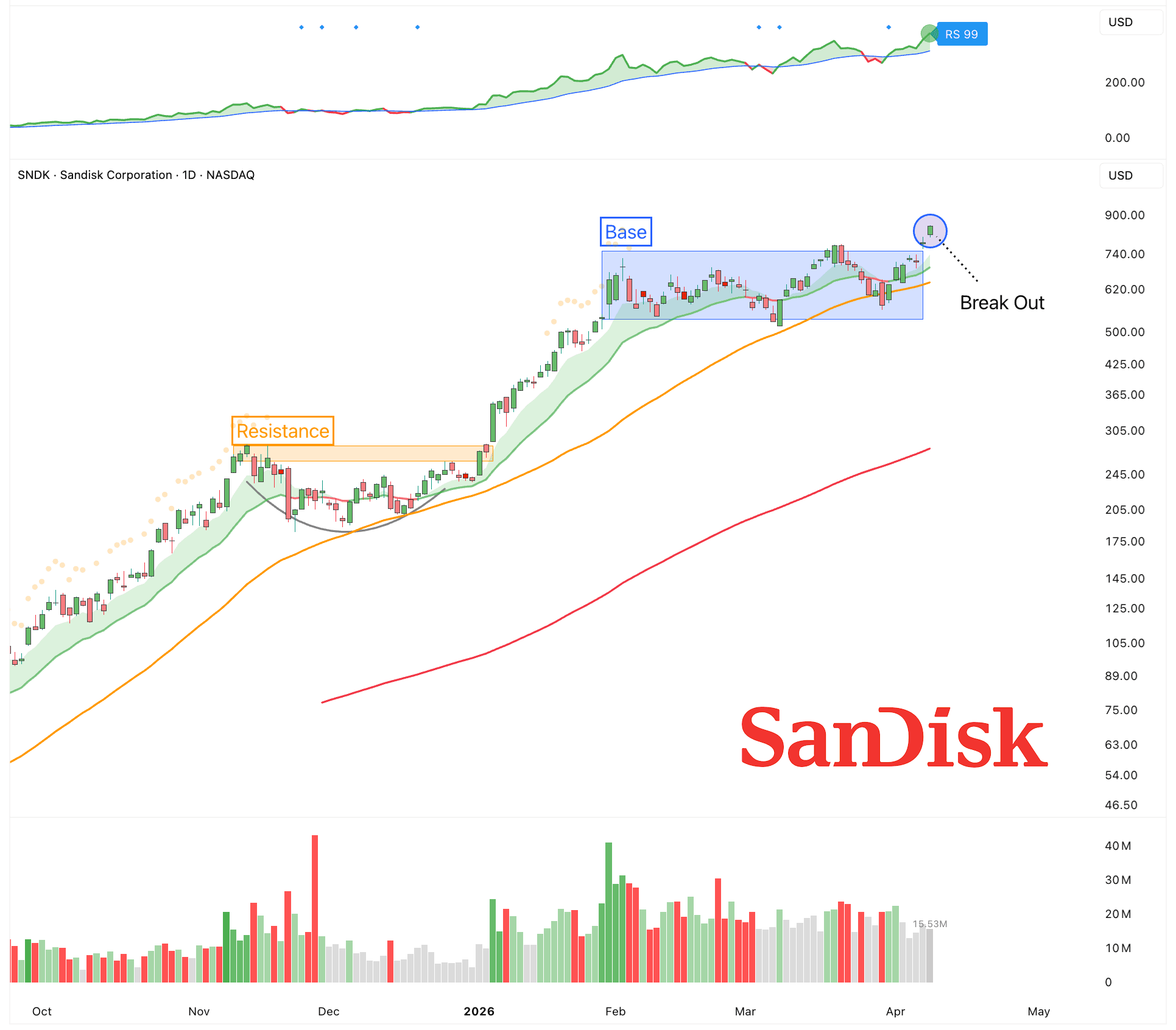

Memory & Storage

Storage is becoming a critical part of the AI infrastructure stack.

Training and running large models requires moving massive amounts of data quickly, which puts pressure on both capacity and speed. Solid-state drives are increasingly used because they offer much faster read and write performance than traditional hard drives, which helps keep GPUs fully utilized instead of waiting for data. Hard drives still matter for long-term data retention and training datasets.

Sandisk ($SNDK)

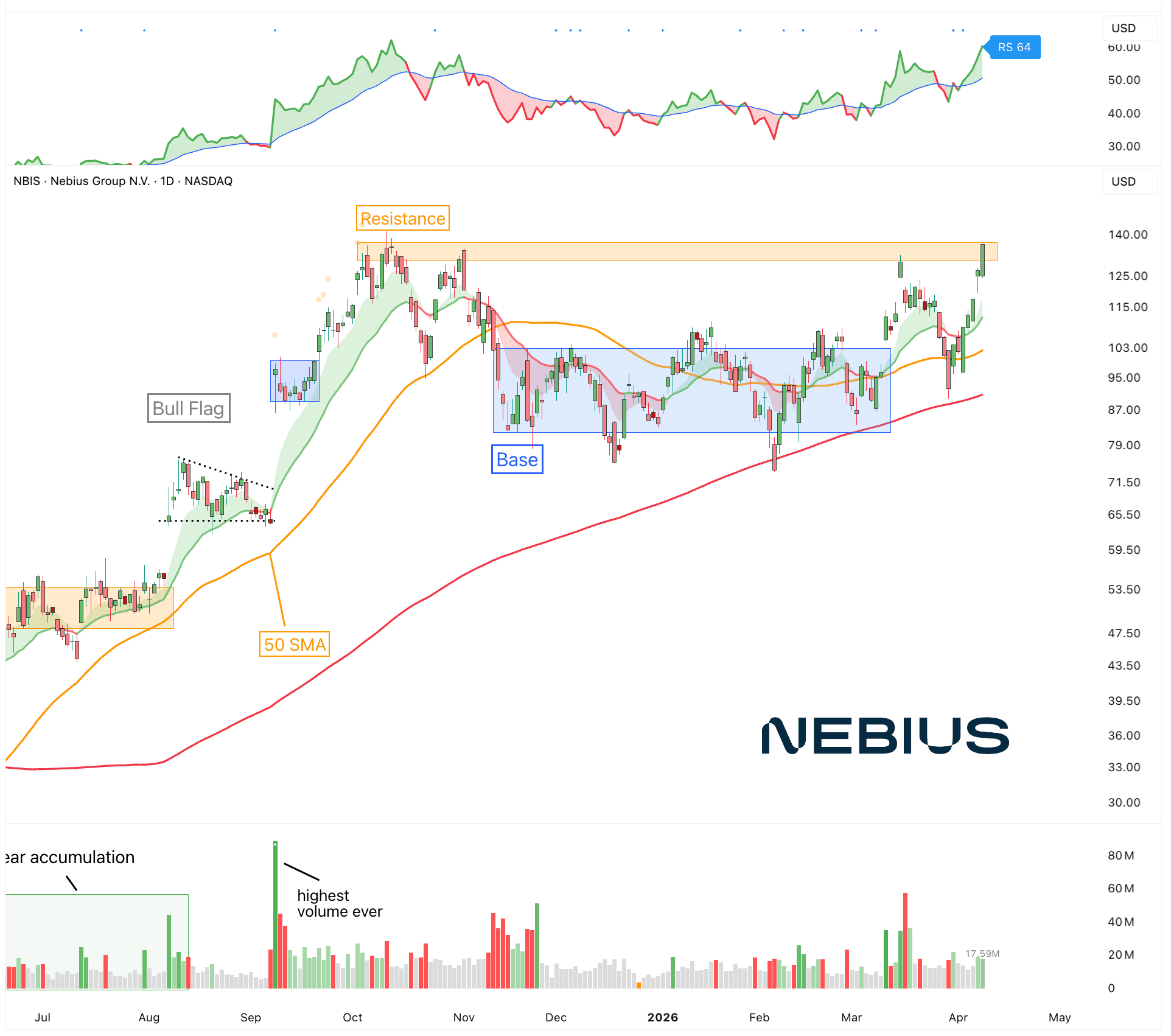

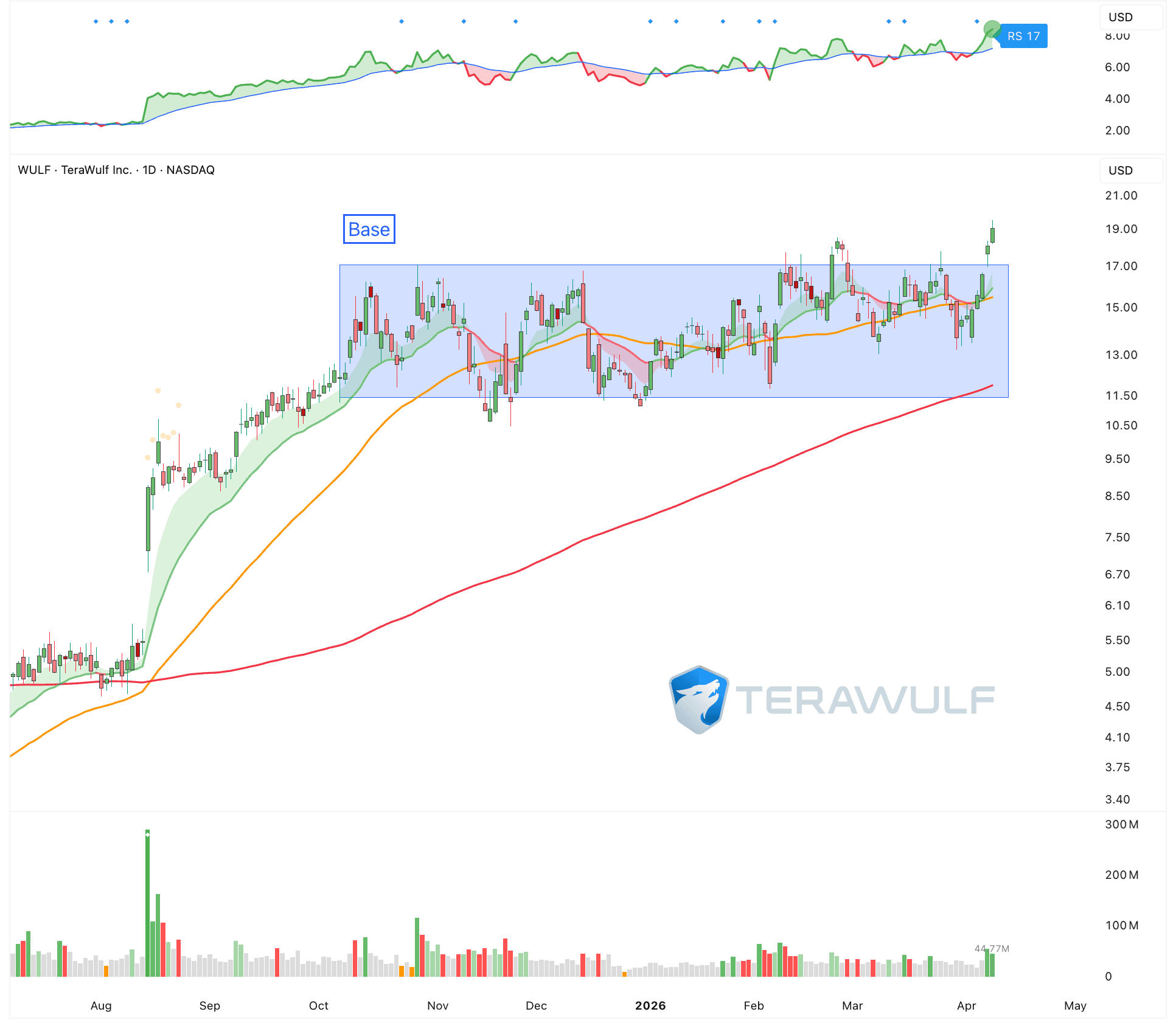

Neocloud Providers

The new generation of cloud providers is at the core of the AI boom.

They supply the physical infrastructure that makes large-scale training and inference possible. This includes high-density racks, advanced cooling systems, high-bandwidth networking, and the power capacity to run clusters of GPUs around the clock.

Traditional hyperscalers are expanding aggressively, but smaller and more specialized AI cloud companies are growing even faster. These firms move quicker and can tailor their architecture specifically for AI workloads.

Nebius ($NBIS)

TeraWulf ($WULF)

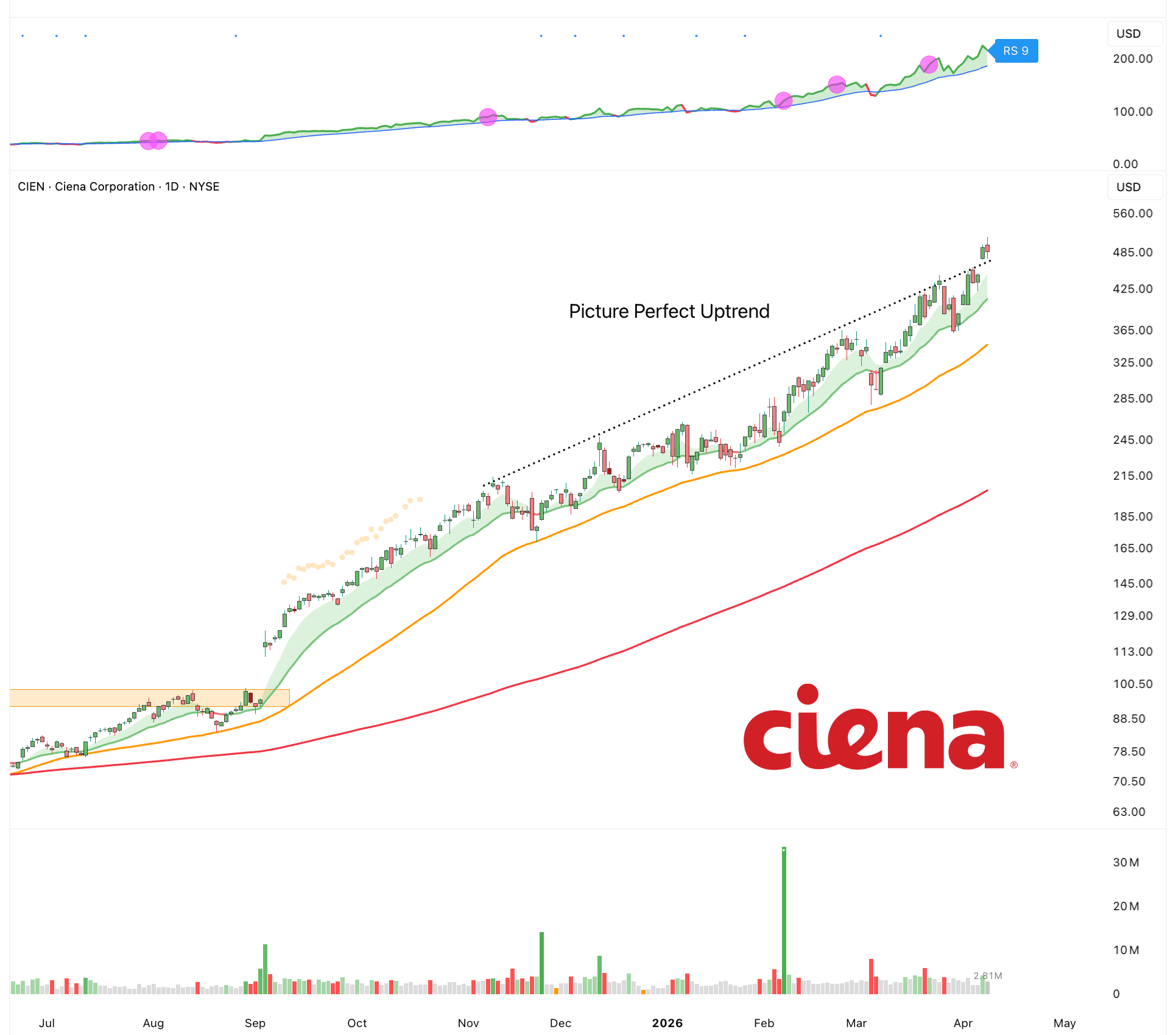

Networking

Moving data between GPUs, servers, and storage systems requires extremely high bandwidth and low latency. Without fast networking, even the most advanced chips sit idle waiting for data.

This is why technologies like high-speed optical interconnects, advanced switches, and specialized network fabrics are seeing strong demand. As models grow and training clusters scale to thousands of GPUs, the bottleneck is no longer just the chip. It is how fast data can move across the system.

High-bandwidth, low-latency networking is required to keep GPUs fully utilized, which is why demand for optical interconnects, advanced switches, and specialized network fabrics is accelerating.

The faster the network, the more efficient the compute.

Networking is not a supporting role. It is a critical layer that directly determines the performance and cost of AI.

Ciena ($CIEN)

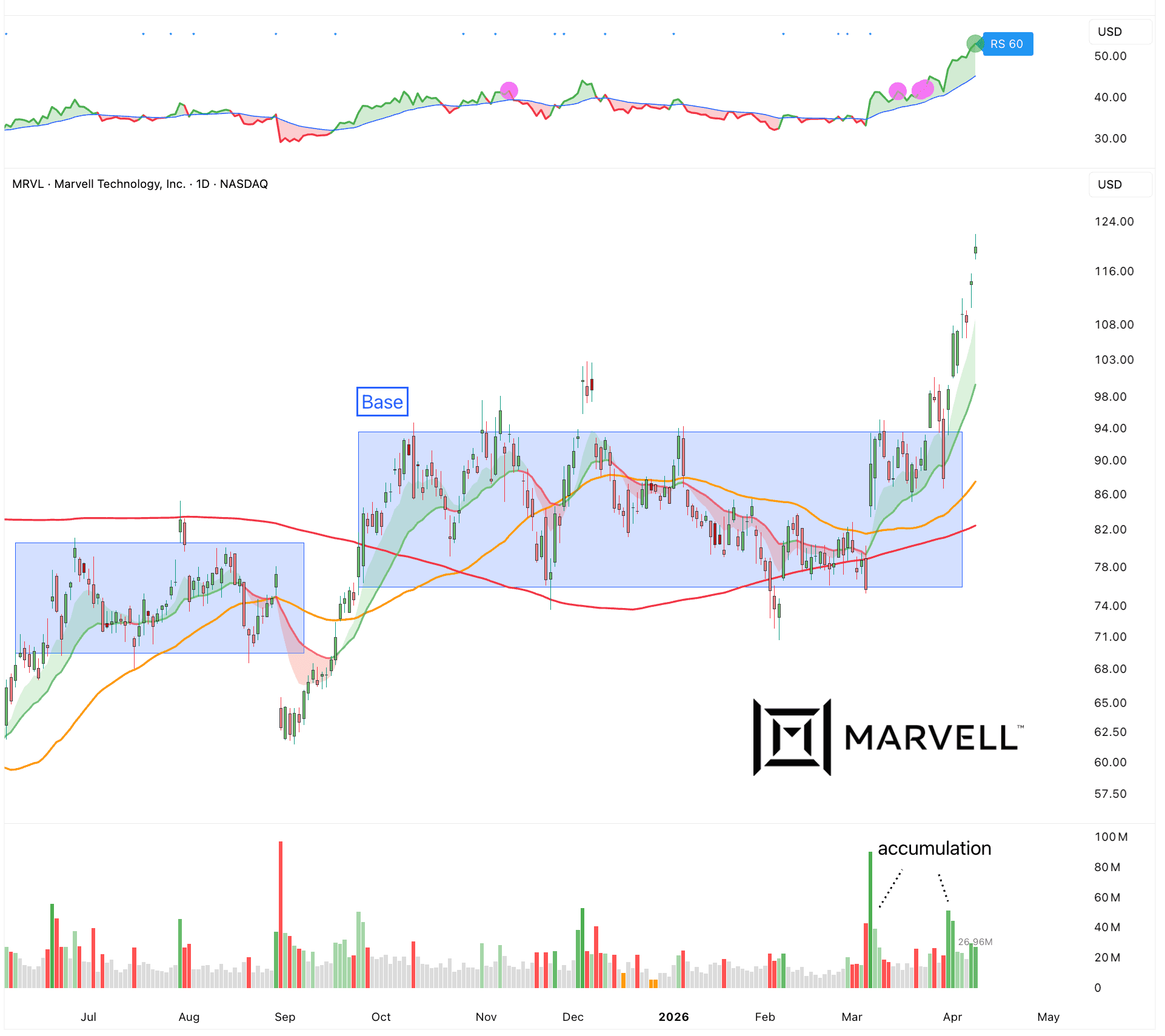

Marvell ($MRVL)

Data Centers

Every app, every AI model, every payment, every video call depends on servers that need constant power, cooling, and uptime. If power fails or systems overheat, everything goes down fast. As AI grows, this becomes even more critical. AI workloads use way more energy and create more heat than normal computing. That means better power systems, liquid cooling, and reliable infrastructure are no longer optional. They are the bottleneck.

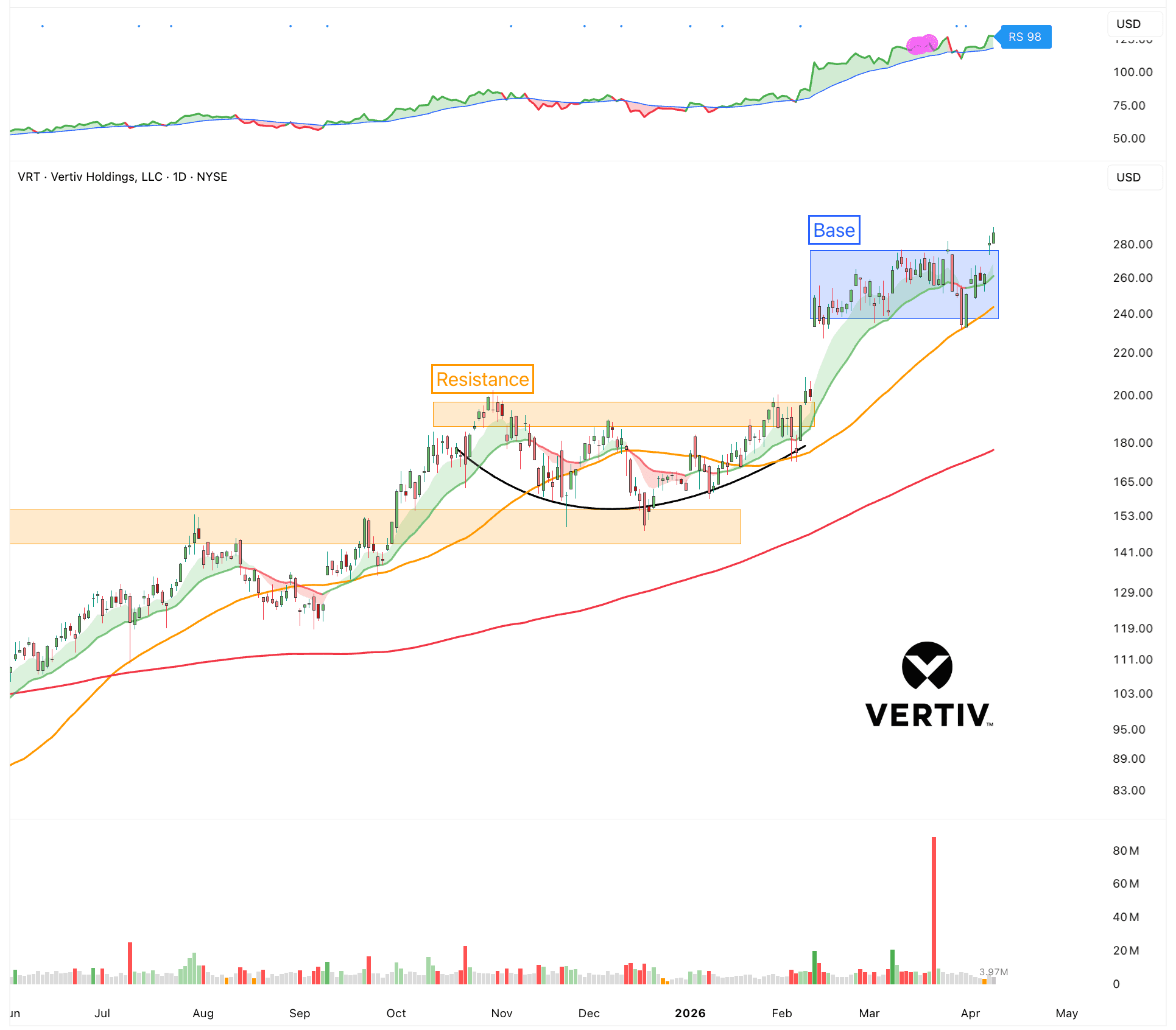

Vertiv ($VRT)

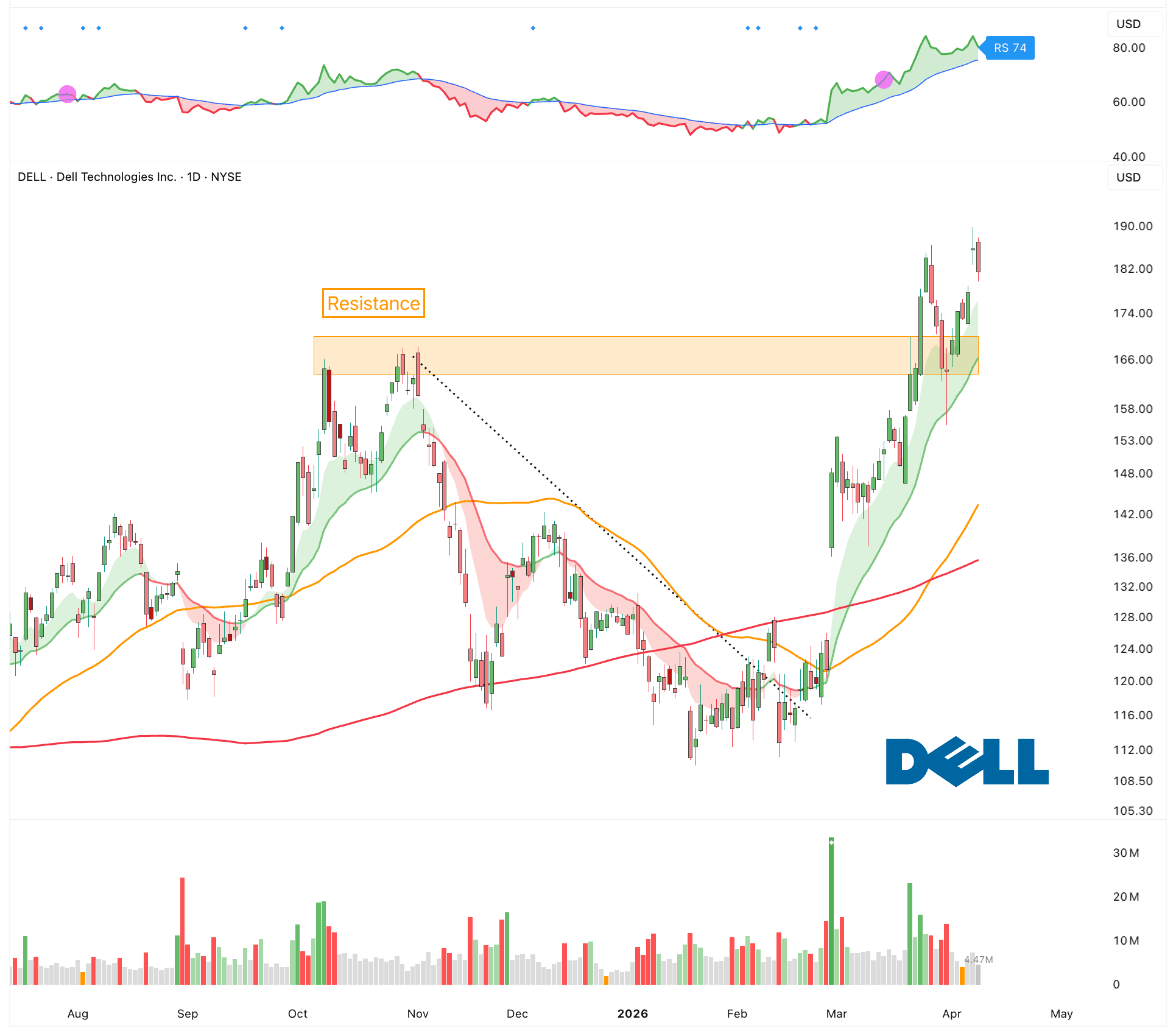

Dell ($DELL)

Semiconductors

Semiconductors remain the foundation of the entire AI ecosystem. Every improvement in model size, speed, and capability depends on more advanced chips and manufacturing processes. Demand for high-performance GPUs, specialized accelerators, memory, and advanced packaging continues to rise as training clusters grow larger and inference moves into real products. At the same time, new fabrication nodes and supply chain capacity are becoming strategic priorities for both companies and governments. Simply put, without continued progress in semiconductors, the AI wave cannot scale.

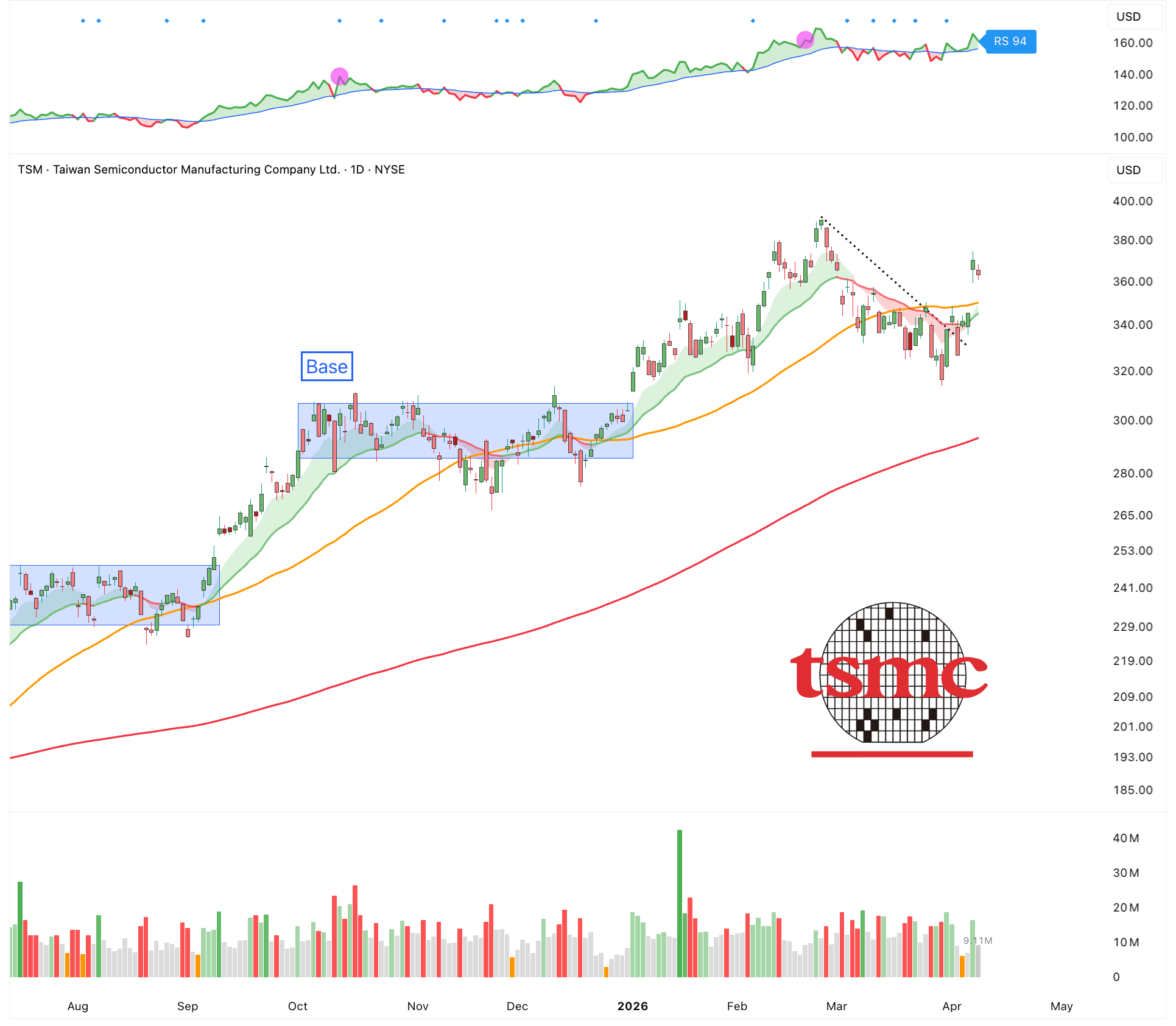

TSMC ($TSM)

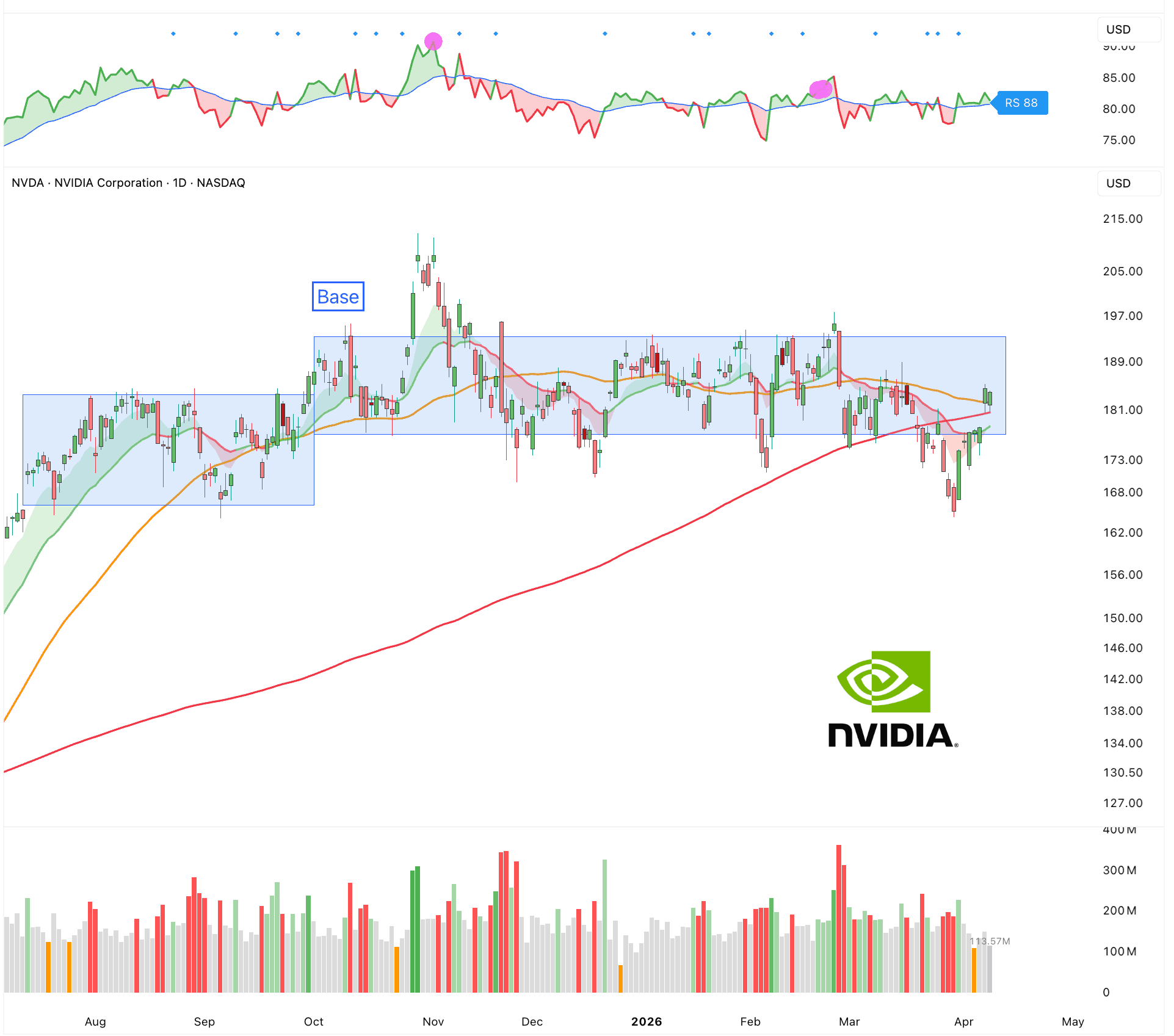

Nvidia ($NVDA)

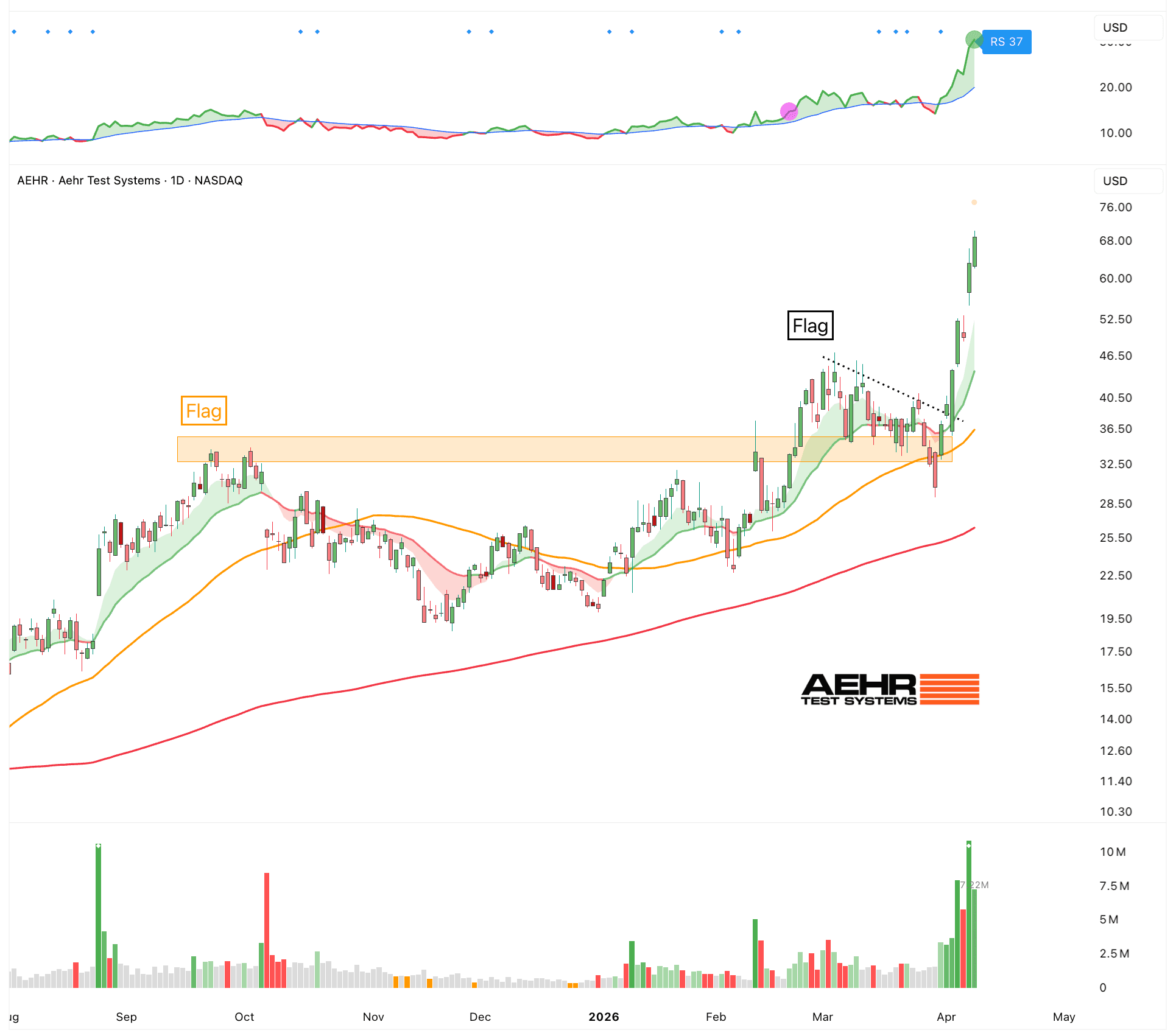

Aehr Test Systems ($AEHR)

Energy

Energy is the core input of computing. Every calculation, every AI model, every database query is just electricity being turned into work. No power means no compute. It is that simple. As workloads grow, especially with AI, the amount of energy needed explodes. Training one large AI model can use as much electricity as thousands of homes. So the limit is no longer just chips. It is also how much energy you can access and deliver reliably.

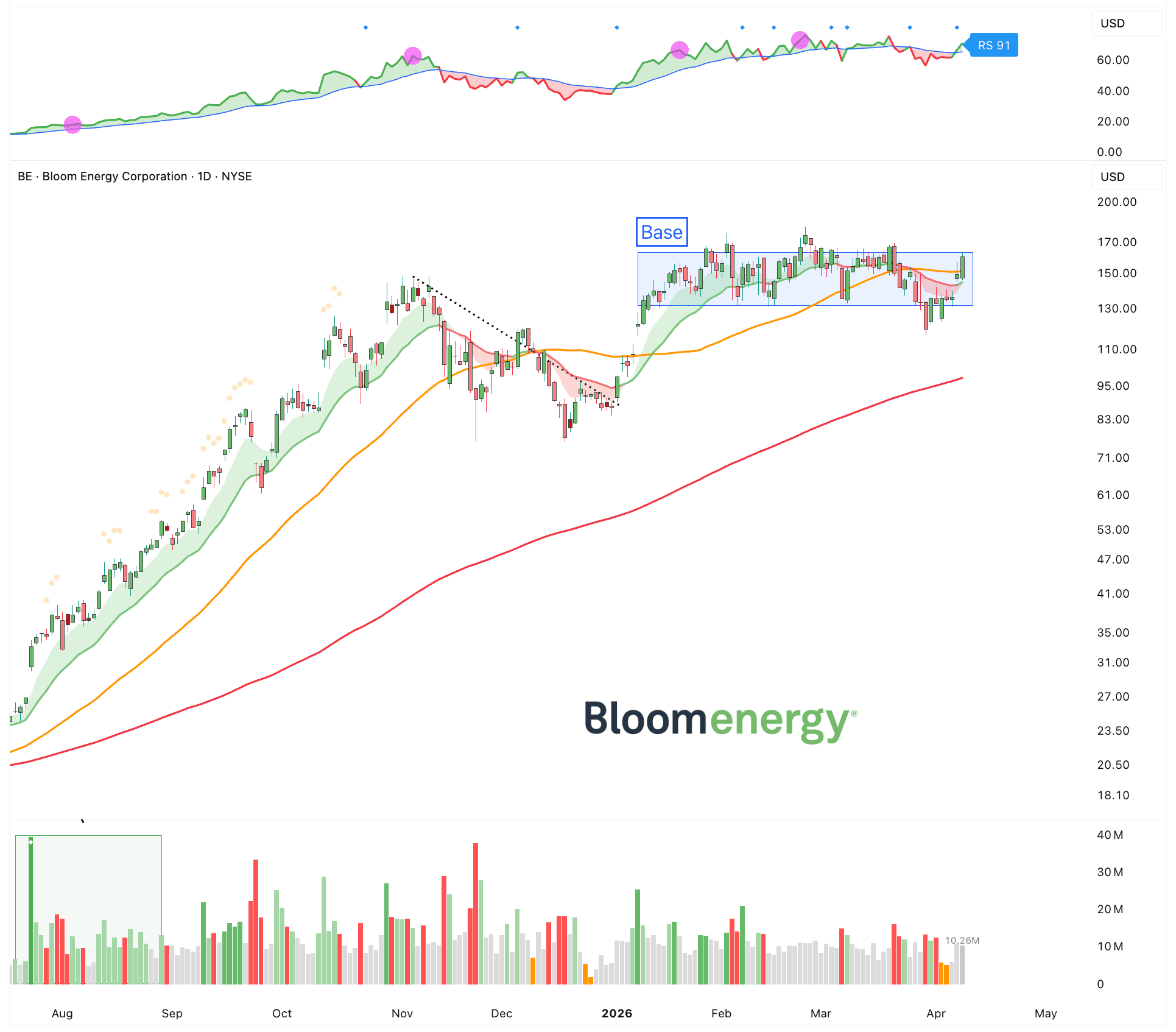

Bloom Energy ($BE)

Photonics

AI is limited by how fast data can move. Inside data centers, chips need to talk to each other constantly. GPUs send massive amounts of data back and forth. Copper cables are hitting their limits. They get hot, lose signal over distance, and cannot scale well at higher speeds. Photonics fixes this by using light instead of electrical signals. Optical links carry more bandwidth, use less energy per bit, and work better over longer distances, which removes a major bottleneck.

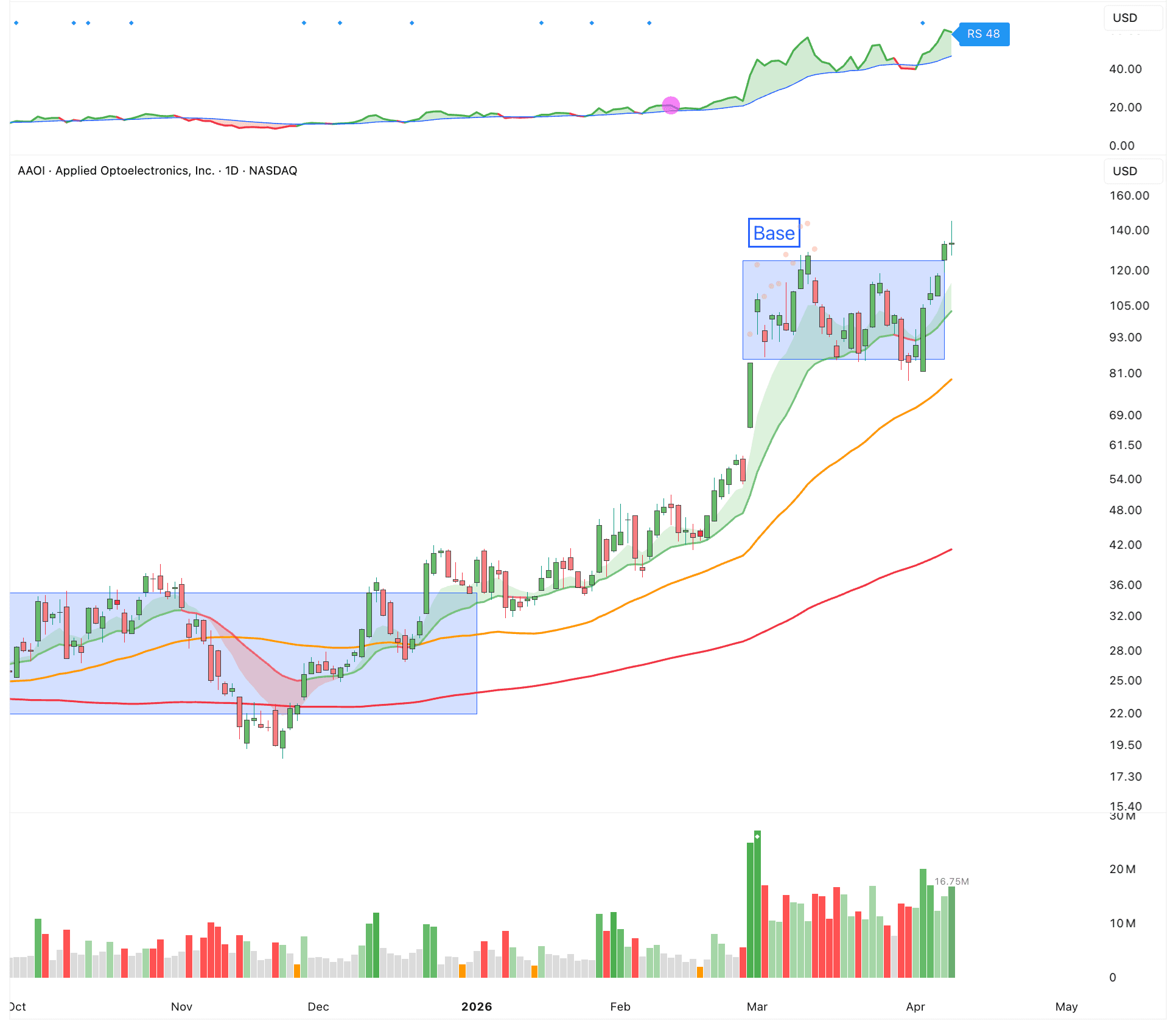

Applied Optoelectronics ($AAOI)

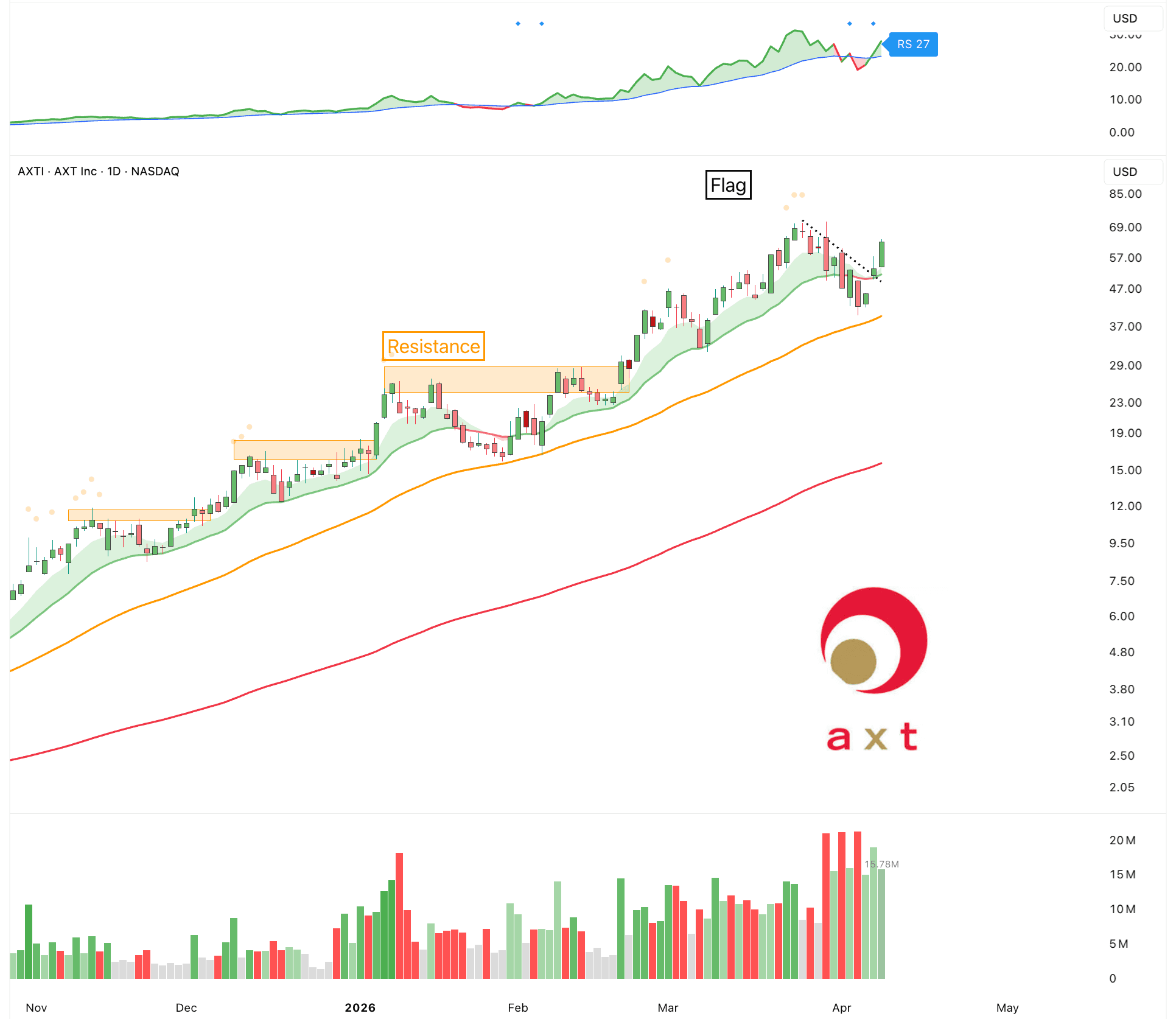

AXT Inc ($AXTI)

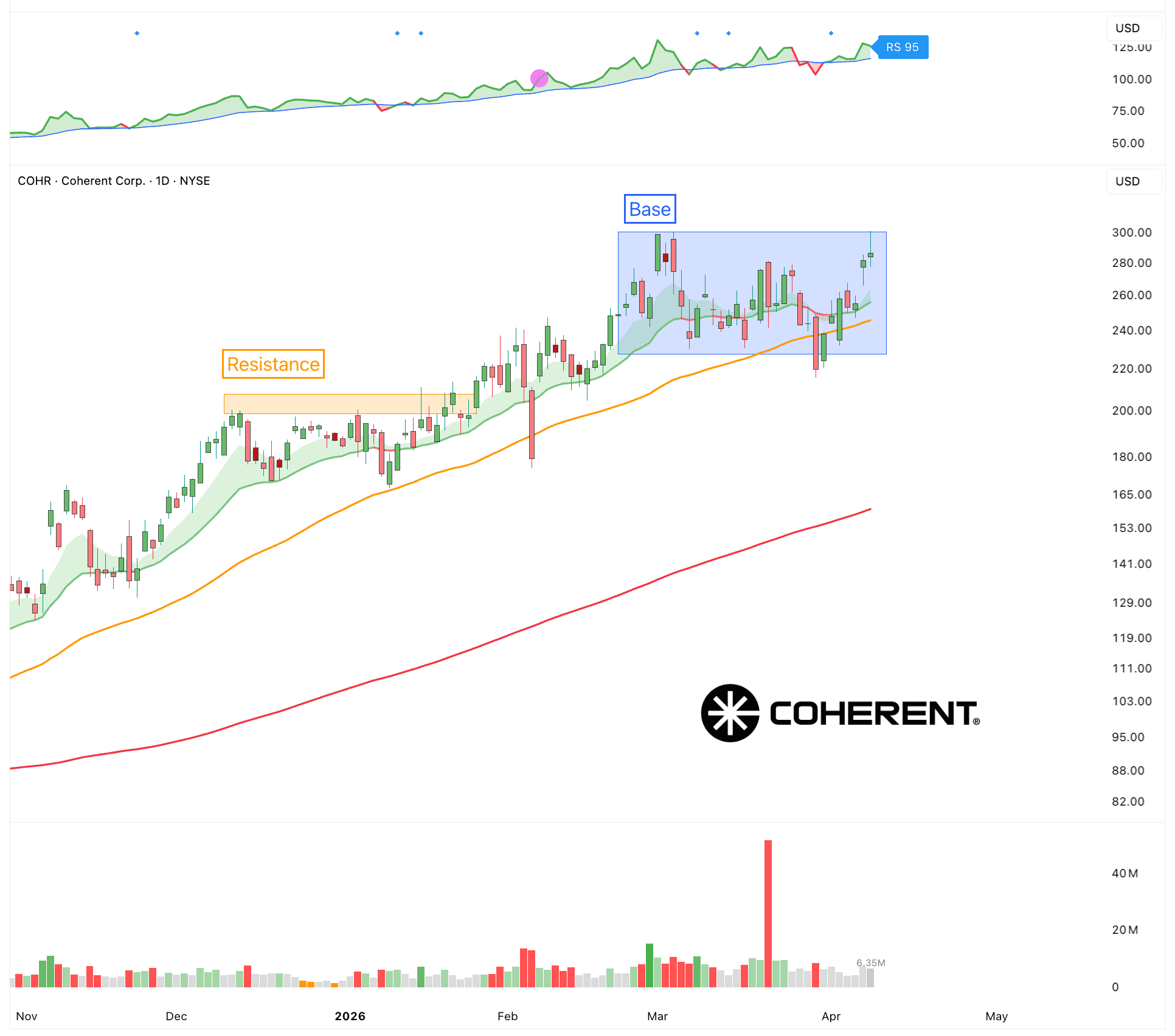

Coherent ($COHR)

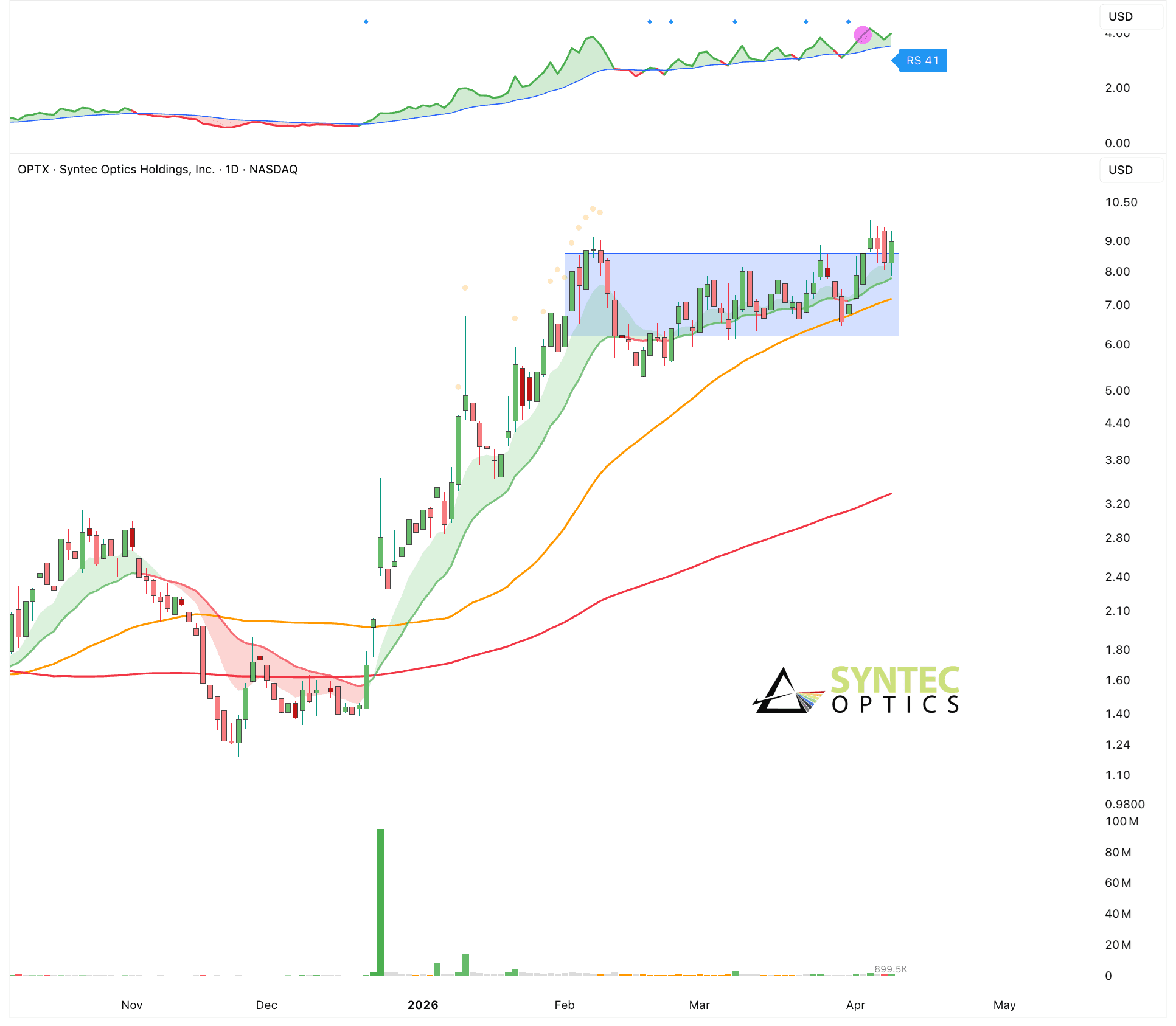

Syntec ($OPTX)

Exposure Level

Guidance: Hold

Trend Indicator

Long-Term: Up

Intermediate-Term: Up

Short-Term: Down

Risk Indicators

Volatility: High

Sentiment: Neutral

Momentum: Neutral

Leading Sectors


Energy

Memory

Photonics

Semiconductors

AI Infrastructure